Basic steps:

- Create a new database in the Windows Azure Control Panel

- Installing the ODBC driver for PostgreSQL

- Creating a project in SQL Server Data Tool

- Data import

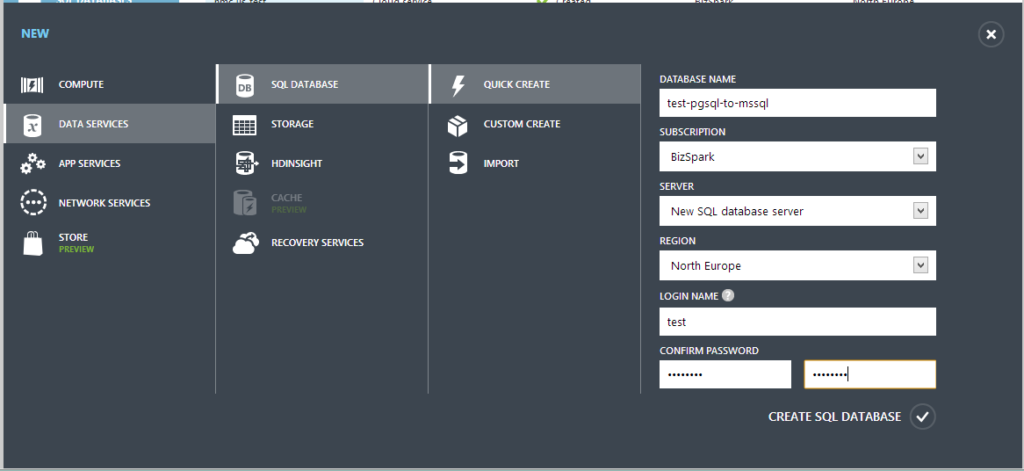

Create a new database in the Windows Azure Control Panel

To create a new database, go to the Windows Azure Control Panel (https://manage.windowsazure.com/). Even if you do not have a subscription, you can use the trial period – $200 for one month, this is more than enough for various experiments. Click the + New button at the bottom left and select Data Services > SQL Database > Quick Create. Fill in the required fields – database name, data center region and login / password to access the database:

After a moment, a message should appear that the database creation was successful:![]()

By default, a database is created with a maximum size of 1GB – after reaching the upper limit, the database goes into read-only mode. However, if necessary, the size can be increased up to 150GB. Installing the ODBC driver for PostgreSQL Now we need to install the ODBC driver for postgresql. Download the latest version from the official site (download the 32-bit version, there are fewer problems with it). Attention! The driver must be installed on the computer on which the data will be copied later, and not on the database server.

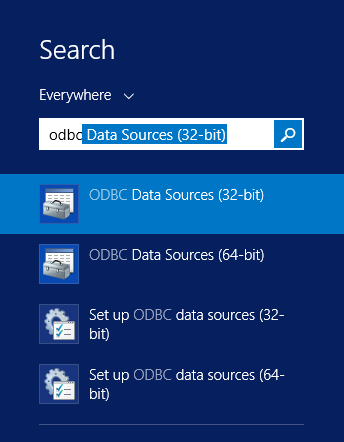

Installation is elementary and does not cause any problems – Next-Next-Next-Finish. After that, you need to add an ODBC Source for both PostgreSQL and SQL Database – just type odbc on the start screen and select ODBC Data Sources (32-bit) from the list

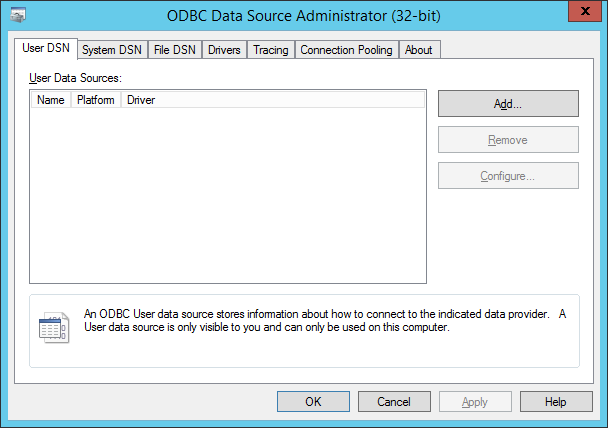

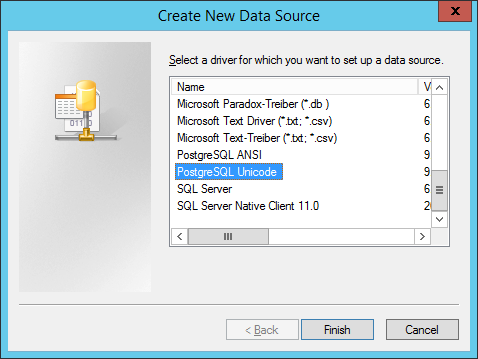

In the window that appears, click Add…, select PostgreSQL Unicode from the list, and click Finish

In the window that appears, click Add…, select PostgreSQL Unicode from the list, and click Finish

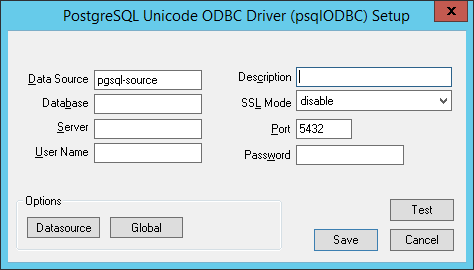

After that, enter all the required data:

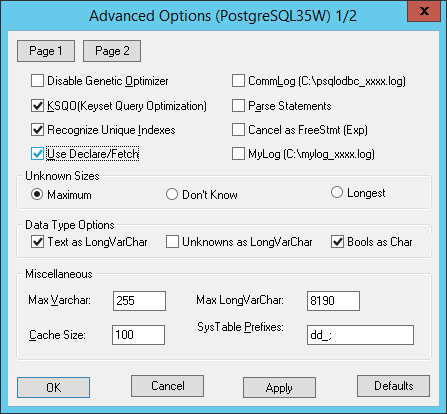

Now one of the points for which this article was written – on this window, click the Datasource button and be sure to check the Use Declare / Fetch checkbox.

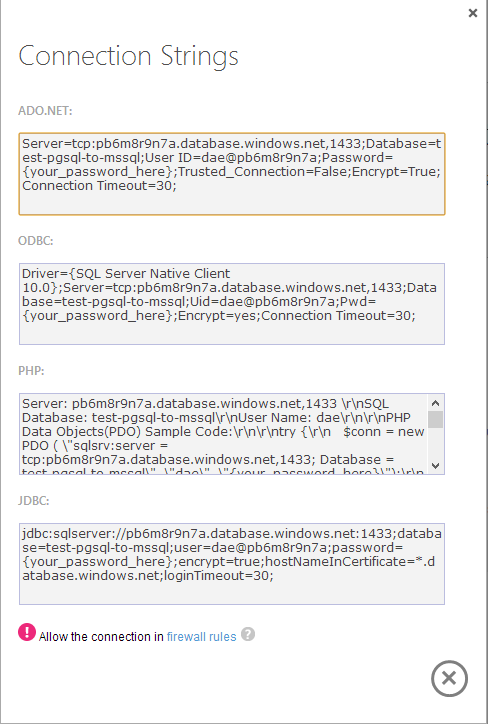

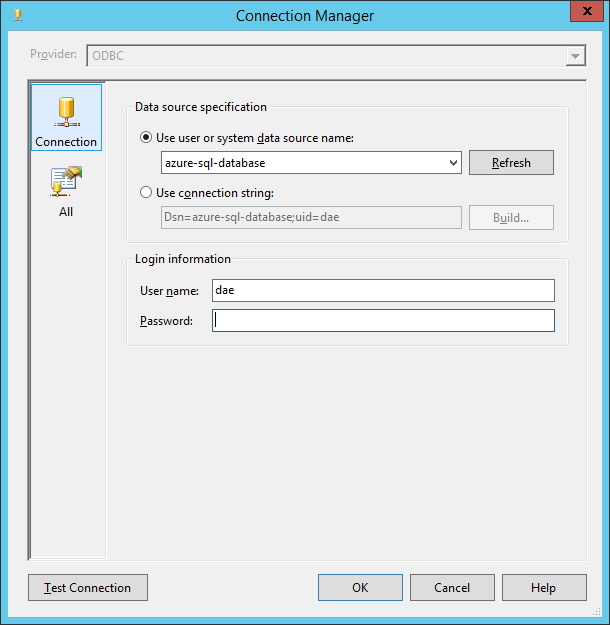

Without this, everything will work while there is little data, but it will crash with an Out of memory error on large volumes – for example, I had this error on a table with approximately 60 million records. Now also create a Data Source for the destination server in the cloud – only select SQL Server Native Client from the list, and connection data can be viewed in the server control panel by clicking “View SQL Database connection strings for ADO .Net, ODBC, PHP, and JDBC “. And, as this window reminds us, we must not forget to allow connections to the database from this IP address – by default, a connection is possible only from IP addresses of Windows Azure services (and even if this option is enabled in the server settings)

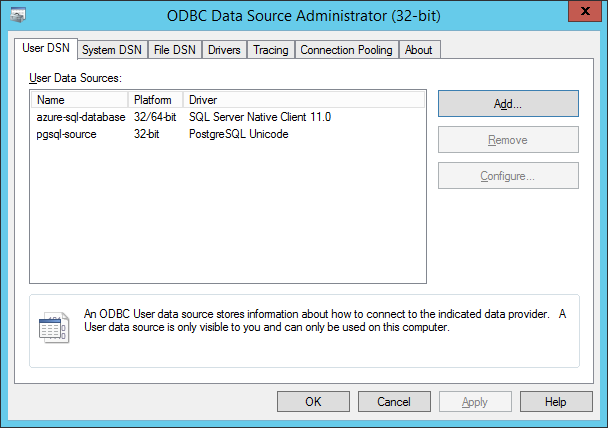

The setup process is also not difficult, and after that you will have something like this:

Creating a project in SQL Server Data Tool

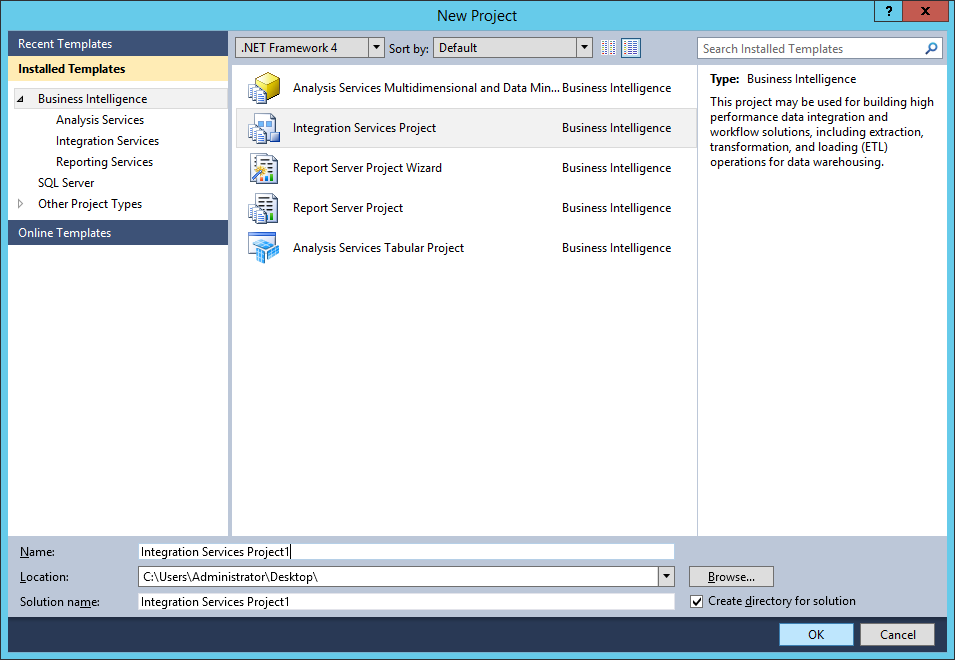

If the migration happened with MySQL, then everything would be easier – there is a wonderful tool SQL Server Migration Assistant (SSMA), which supports, among other things, MySQL, and allows you to migrate very simply and quickly. But there is no such thing for PostgreSQL, so you will have to use the SQL Server Data Tool (which, however, is also not difficult). Launch SQL Server Data Tool, click New Project and create a project based on the Integration Service Project template

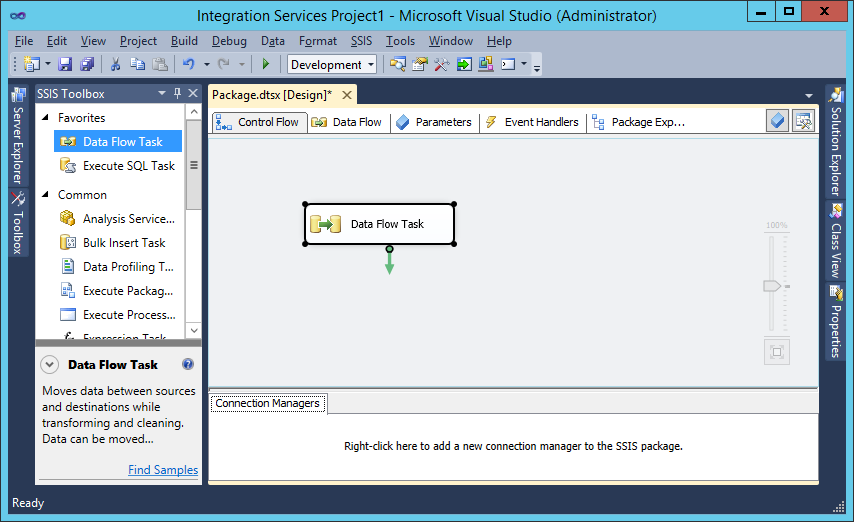

Drag and drop a Data Flow Task from the Toolbox, double click on it and you will be taken to the Data Flow tab.

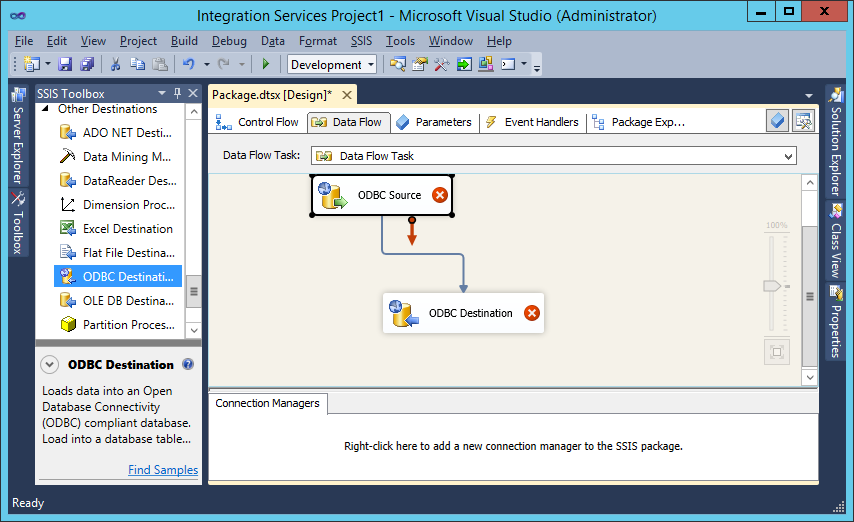

Drag and drop ODBC Data Source and ODBC Destination onto the workspace and connect them with the blue arrow coming from ODBC Source:

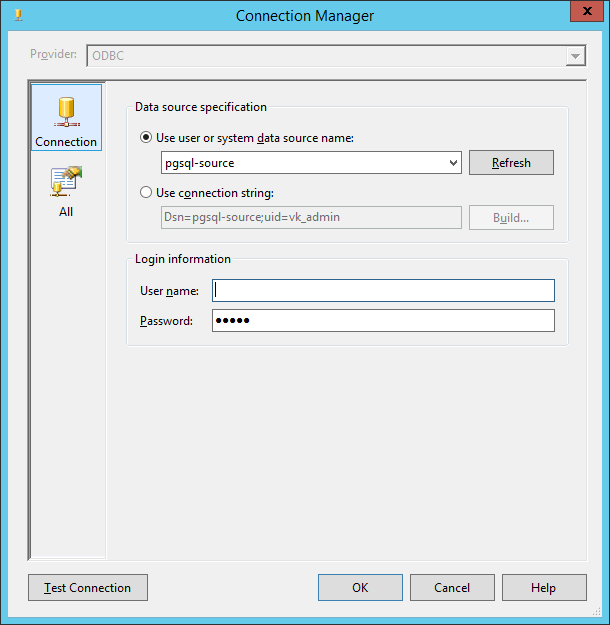

Double-click on ODBC Source, in the window that appears, click New, then New again and select our data source:

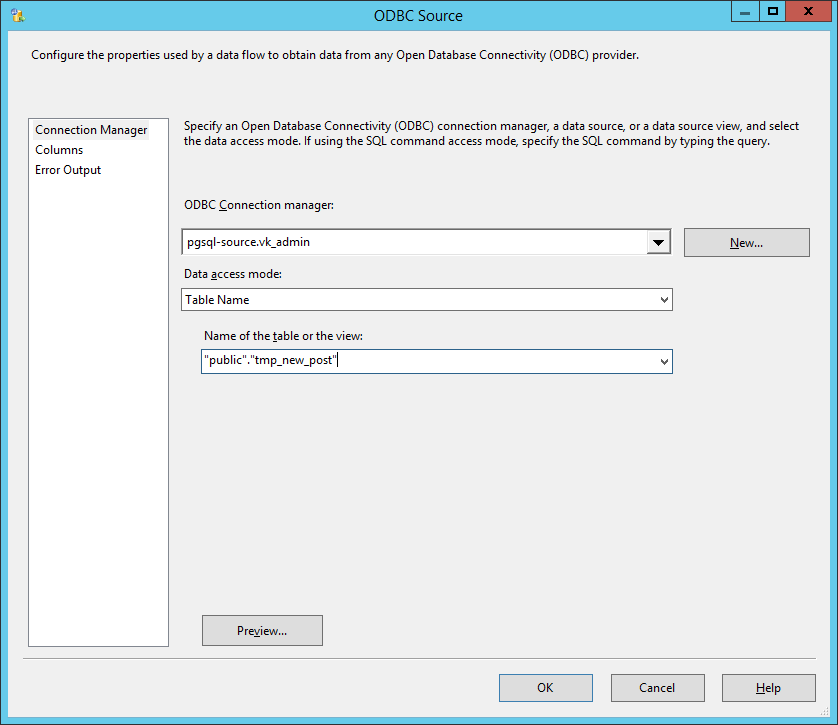

Click Ok twice to close these windows and select the table from which the data will be imported:

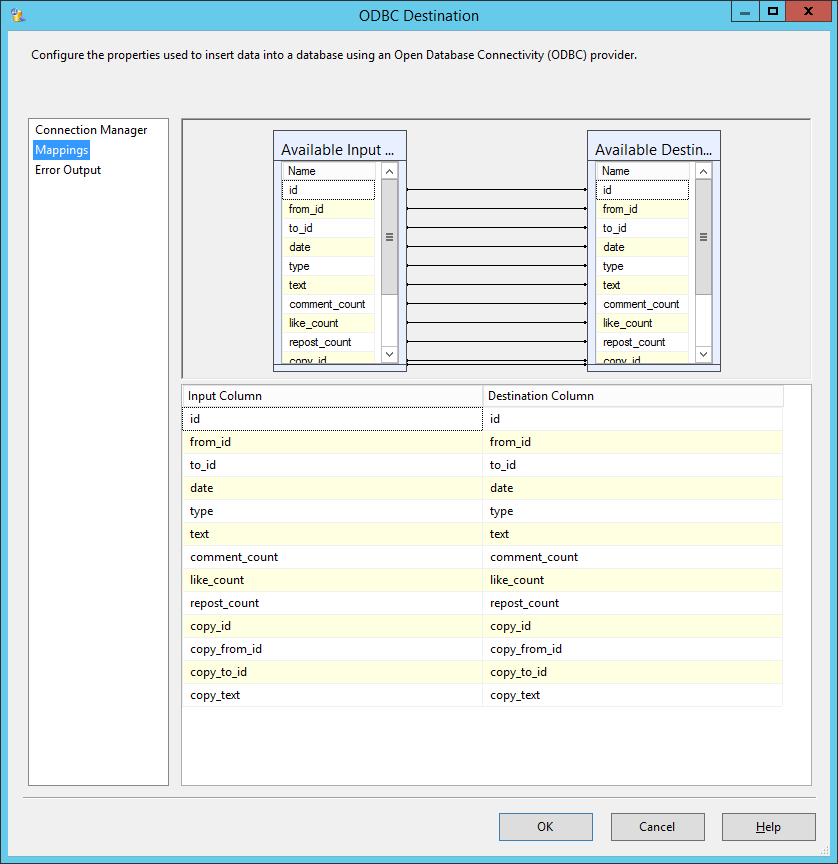

Now you need to configure the data receiver in the same way – ODBC Destination

You can also adjust the column matching if necessary.

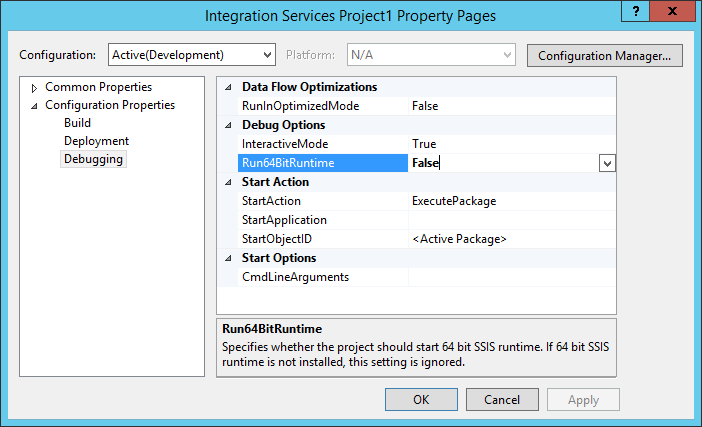

Now another important point – you should switch the project launch to 32-bit mode. To do this, right-click on the project in Solution Explorer, select Properties, and under Configuration Properties -> Debugging set Run64BitRuntime to False.

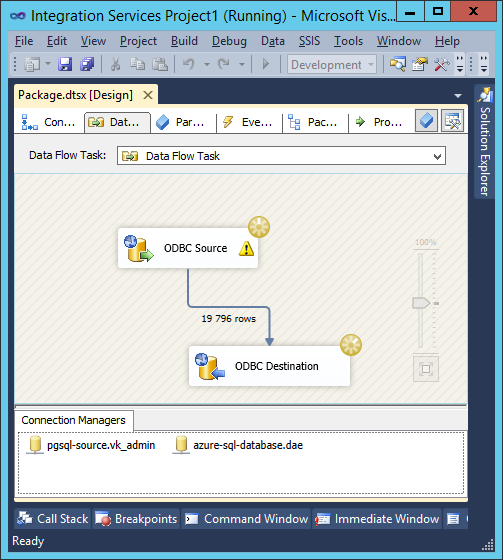

Data import Now everything is ready for data transfer! Press F5 or the “Start Debugging” button on the toolbar. Indicators will appear in the workspace, indicating that data is being transferred:

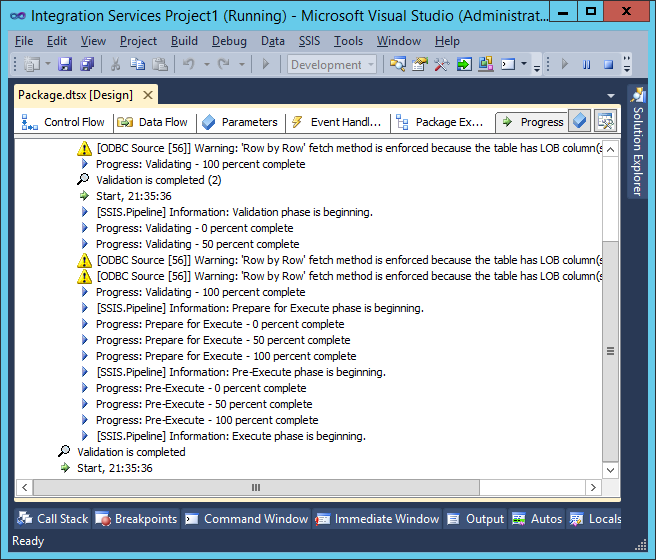

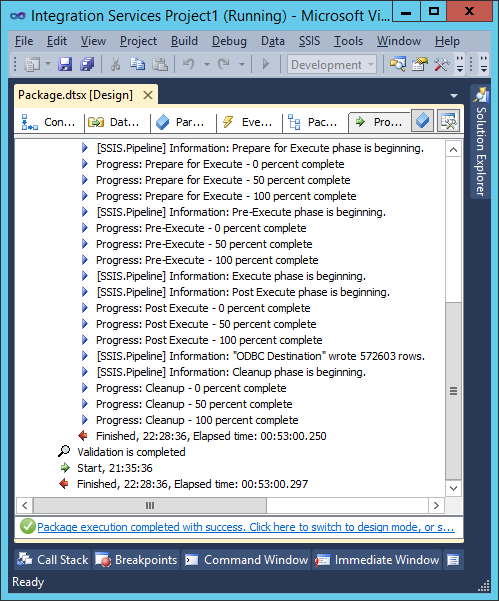

А в панели Progress можно наблюдать текстовый отчет:

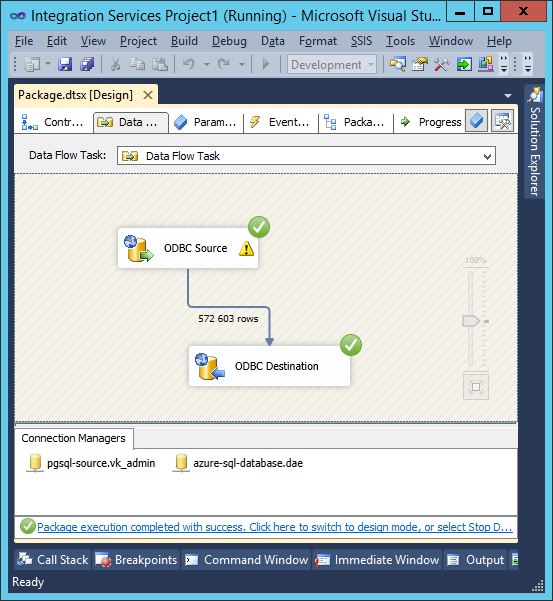

After making sure that everything is going according to plan, you can go to drink coffee / tea – this is a rather long process (in my opinion, SSMA worked faster). After the process is completed, the data source and destination will have green checkmarks:

And in the Progress window – a text report:

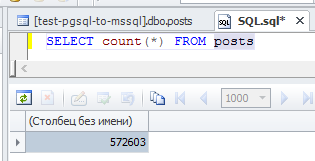

As you can see, 570K lines were copied in 50 minutes. You can connect to the cloud database and make sure that the data is really there:

This is how you can easily and quickly migrate a large amount of data from PostgreSQL to a cloud-based Windows Azure SQL Database.